Do GEO Tactics Actually Work? Here's What 8 Studies Found

The Problem With Most GEO Advice

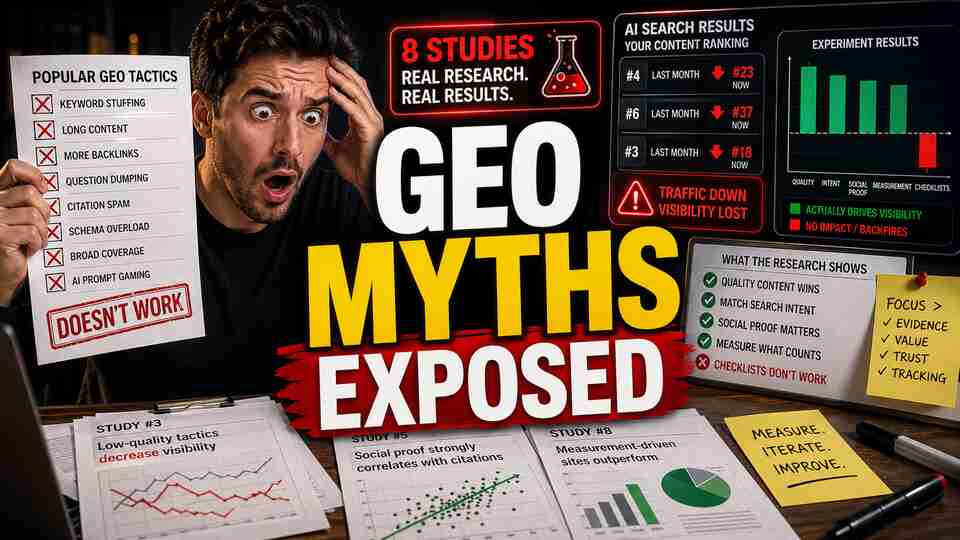

Most GEO guides are written by marketers, not researchers. They recommend tactics based on intuition, anecdote, or reverse-engineering a few AI responses. That's fine for generating content, but it means the advice has never been stress-tested against real data at scale.

In the past 18 months, several research groups have actually done that stress-testing. The results are uncomfortable for anyone selling a GEO checklist. Most common tactics either don't work or actively hurt performance. Measurement itself turns out to be fundamentally unreliable. And the strategies that do show promise require infrastructure that most teams don't have.

Here's what eight peer-reviewed and preprint studies found, and what it means for how you should actually approach AI search visibility.

1. Dedicated Manipulation Tactics Hurt More Than They Help

The most striking result came from C-SEO Bench, accepted at NeurIPS 2025. Researchers tested dedicated conversational SEO (C-SEO) manipulation tactics across ChatGPT Search, Perplexity, Google AI Overviews, and Amazon Rufus. The finding was blunt: most tactics are not just ineffective, they actively harm rankings.

The researchers also identified a structural problem they called zero-sum congestion. When all players in a space adopt the same tactics simultaneously, collective gains collapse to zero. The tactics become ambient noise that LLMs learn to discount. You end up in an arms race that nobody wins.
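A toy illustration of why uniform adoption cancels out, assuming (purely for the sake of the example) that a tactic adds the same fixed lift to every page that uses it. The scores, the `BOOST` value, and the `rank_positions` helper are hypothetical and are not taken from the C-SEO Bench paper:

```python
def rank_positions(scores):
    """Return each page's rank (1 = best) given a dict of page -> score."""
    ordered = sorted(scores, key=scores.get, reverse=True)
    return {page: i + 1 for i, page in enumerate(ordered)}

# Hypothetical baseline quality scores for four competing pages.
quality = {"a": 0.9, "b": 0.7, "c": 0.5, "d": 0.3}
BOOST = 0.25  # assumed uniform lift from a tactic; not a measured value

baseline = rank_positions(quality)

# Only page "c" adopts the tactic, so it overtakes page "b".
solo = rank_positions(
    {page: q + (BOOST if page == "c" else 0.0) for page, q in quality.items()}
)

# Every page adopts the tactic: the ordering matches the baseline exactly.
everyone = rank_positions({page: q + BOOST for page, q in quality.items()})

print("baseline:", baseline)
print("only c adopts:", solo)
print("everyone adopts:", everyone)
```

Real engines are not this simple, but the ordering argument is the same: a lift that every competitor receives changes nobody's relative position.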

What held up instead? Traditional content quality: structured, accurate, well-organized writing. Not because AI systems are naive, but because high-quality content signals are harder to fake and more durable across model updates.

This is peer-reviewed, NeurIPS-level research. It is not an industry opinion piece.

2. 10 of 15 Common GEO Heuristics Do Not Work

The E-GEO study out of MIT (November 2025) tested 15 widely cited GEO heuristics across 7,151 queries and 52,165 Amazon product listings. Ten of the fifteen produced negligible or negative results.

The worst single performer was storytelling framing, which produced an average ranking drop of 4.03 positions. This is a tactic that appears in multiple popular GEO guides as a recommended approach.

What actually moved the needle: user intent alignment, competitive differentiation, social proof (real reviews and ratings), and factual accuracy. None of those are novel. They are the same principles that have driven good content for years.

The MIT team also used GPT-4o as a meta-optimizer to search for universally applicable strategies. It found one that generalized across diverse prompts. The interesting part is what that result implies: there may be a small set of genuinely effective signals, but they are not the ones being taught in most GEO courses.

3. Being Cited Does Not Mean You Are Shaping the Answer

CC-GSEO-Bench (September 2025, 1,030 articles, 5,353 query-article pairs) introduced a distinction that most GEO practitioners ignore: citation frequency versus causal influence. The correlation between the two is r = 0.019, essentially zero.

In plain terms: an AI might cite your article while drawing none of its reasoning from it. Tracking citations without understanding whether those citations shape the actual response is measuring the wrong thing entirely.
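To make the r value concrete, here is a minimal sketch of a Pearson correlation between how often each article is cited and how much it shaped the answer. The `pearson_r` helper and both data lists are hypothetical; in particular, the influence scores are placeholders, since this article does not describe how CC-GSEO-Bench scores causal influence:

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)

# Hypothetical per-article data: times cited, and an assumed 0-1 influence score.
citation_counts = [12, 3, 7, 1, 9, 4]
influence_scores = [0.2, 0.6, 0.1, 0.5, 0.4, 0.3]

print(f"r = {pearson_r(citation_counts, influence_scores):.3f}")
```

A value near zero, like the 0.019 reported in the study, means that knowing an article's citation count tells you almost nothing about its influence score.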

The study also found that adding quotes is the most effective single strategy across its evaluation dimensions. Statistics, by contrast, are a double-edged tool: they boost perceived causal impact but degrade trustworthiness scores. That tradeoff is not visible if you are only tracking whether you got cited.

This is exactly why source gap analysis matters. Citation counts do not tell you whether you are influencing how AI systems reason about your category. You need to know what role your content is actually playing in the generated answer.

4. Systematic Optimization Works, But It Is Not Guesswork

AutoGEO (CMU, October 2025) is the most optimistic result in this set, and it is worth understanding precisely what it found. The AutoGEO framework learns generative engine preferences automatically and achieved a 35.99% average improvement in GEO visibility metrics.

That sounds like a strong endorsement of GEO optimization. But the key word is "automatically." The framework works by systematically learning what different engines prefer, not by applying a fixed list of tactics. It is preference learning over many iterations, not a checklist you run once.
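The difference between a feedback loop and a checklist can be sketched with a simple epsilon-greedy bandit. This is a deliberately basic stand-in and not AutoGEO's actual method; the `epsilon_greedy` function, the strategy names, and the `measure` callback (which would wrap whatever visibility metric you track) are all assumptions made for illustration:

```python
import random

def epsilon_greedy(strategies, measure, rounds=200, epsilon=0.1, seed=0):
    """Repeatedly pick a strategy, observe its score, and track running means.

    strategies: list of hashable strategy labels (hypothetical names).
    measure:    callable(strategy) -> float, the observed visibility score.
    Returns a dict mapping each tried strategy to its mean observed score.
    """
    if not strategies:
        raise ValueError("need at least one strategy")
    rng = random.Random(seed)
    totals = {s: 0.0 for s in strategies}
    counts = {s: 0 for s in strategies}
    for _ in range(rounds):
        untried = [s for s in strategies if counts[s] == 0]
        if untried:
            choice = untried[0]                      # try everything once first
        elif rng.random() < epsilon:
            choice = rng.choice(strategies)          # explore
        else:
            choice = max(strategies, key=lambda s: totals[s] / counts[s])  # exploit
        totals[choice] += measure(choice)
        counts[choice] += 1
    return {s: totals[s] / counts[s] for s in strategies if counts[s]}

# Hypothetical noisy metric: "clear_answers" is truly better than "storytelling".
noise = random.Random(1)
true_mean = {"storytelling": 0.2, "clear_answers": 0.6}
means = epsilon_greedy(
    list(true_mean), lambda s: true_mean[s] + noise.uniform(-0.3, 0.3)
)
print(means)
```

The point of the sketch is the shape of the loop: each round feeds a fresh measurement back into the next choice, which is something a one-time checklist cannot do.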

This validates that optimization is possible. It also explains why ad hoc tactics fail: without a systematic feedback loop, you are guessing at what a black-box model rewards, and those preferences shift across engines, query types, and model versions. BabyPenguin's prompt-level tracking across ChatGPT, Gemini, and Grok is built around exactly this kind of systematic feedback rather than one-time audits.

5. Previous Benchmarks Used Unrealistic Conditions

SAGEO Arena (February 2026) is the first GEO benchmark designed around realistic query types. Its main finding: many of the positive GEO results reported in prior research do not hold when tested under realistic conditions.

This matters because a significant portion of the GEO literature, and by extension a significant portion of GEO advice, was built on benchmarks that do not reflect how real users actually query AI systems. Results that looked promising in controlled settings evaporated when tested against the messy diversity of actual search behavior.

If you have read studies showing that a particular GEO tactic improved visibility by some percentage, there is a reasonable chance that improvement was measured on a benchmark that SAGEO Arena would classify as unrealistic. Be skeptical of any study or case study that does not describe its query methodology in detail.

6. Most GEO Wins Are Within Measurement Noise

"Don't Measure Once" (April 2026) may be the most underappreciated paper in this list. Its finding: GEO measurement is fundamentally unstable. The same content, the same query, a different result on each run. Single measurements are unreliable. You need multiple runs and averages before any signal emerges from the noise.
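As a rough sketch of what "multiple runs and averages" can look like in practice, the snippet below repeats one query many times and reports the brand mention rate with a 95% Wilson score interval. It is not the paper's protocol. `ask_engine` is a hypothetical callable standing in for a real engine call, and `fake_engine`, the query, and the brand name are made up for the example:

```python
import random
from math import sqrt

def wilson_interval(hits, runs, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = hits / runs
    denom = 1 + z * z / runs
    centre = (p + z * z / (2 * runs)) / denom
    half = z * sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

def mention_rate(ask_engine, query, brand, runs=30):
    """Ask the same query `runs` times; return (rate, (low, high))."""
    hits = sum(brand.lower() in ask_engine(query).lower() for _ in range(runs))
    return hits / runs, wilson_interval(hits, runs)

# Hypothetical stand-in for a real engine: mentions the brand about 40% of the time.
_rng = random.Random(7)

def fake_engine(query):
    return "Try ExampleBrand." if _rng.random() < 0.4 else "Try something else."

rate, (low, high) = mention_rate(fake_engine, "best crm for startups", "ExampleBrand")
print(f"mention rate {rate:.2f}, 95% interval {low:.2f} to {high:.2f}")
```

Even at 30 runs the interval is wide, which is the practical meaning of "unstable": a single run tells you only that the true rate is somewhere between 0 and 1.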

The implication is harsh: most GEO wins reported in case studies are within measurement noise. Someone tries a new tactic, runs a query, sees their brand mentioned, and declares victory. That is not a result. That is a sample size of one from a stochastic system.
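One way to check whether a before/after difference clears the noise is a two-proportion z-test on mention counts from repeated runs. This is a minimal sketch using a standard textbook test, not a method taken from the paper; the `two_proportion_z` helper and the counts are hypothetical:

```python
from math import erfc, sqrt

def two_proportion_z(hits_a, runs_a, hits_b, runs_b):
    """Two-sided z-test for a difference between two mention rates.

    Returns (z, p_value). A large p_value means the difference is
    consistent with run-to-run noise.
    """
    if runs_a <= 0 or runs_b <= 0:
        raise ValueError("both run counts must be positive")
    p_a = hits_a / runs_a
    p_b = hits_b / runs_b
    pooled = (hits_a + hits_b) / (runs_a + runs_b)
    se = sqrt(pooled * (1 - pooled) * (1 / runs_a + 1 / runs_b))
    if se == 0:
        return 0.0, 1.0  # both rates are 0 or both are 1: no detectable difference
    z = (p_b - p_a) / se
    p_value = erfc(abs(z) / sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value

# Hypothetical counts: brand mentioned in 9 of 30 runs before a change, 12 of 30 after.
z, p = two_proportion_z(9, 30, 12, 30)
print(f"z = {z:.2f}, p = {p:.2f}")
```

With these made-up counts, a jump from 30% to 40% looks like a win but is not distinguishable from chance at 30 runs per side. A single before/after query has even less power.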

This is the core reason that AI search volatility deserves more attention than it gets. The engines themselves are non-deterministic. Model updates happen without announcement. If your measurement approach cannot account for that variance, you are not measuring GEO performance. You are measuring noise.

BabyPenguin runs multiple query iterations and aggregates results precisely because single-run snapshots are meaningless. Tracking your AI search presence requires statistical thinking, not one-off spot checks.

7. LLM-Based Rewriting Works in Controlled Settings, Not Real Search

"White Hat SEO using LLMs" (February 2025) tested whether LLM-based document rewriting could improve rankings. In controlled competition settings, it could. The researchers also found that pairwise comparison context, letting the model see what competing documents look like, helped guide optimization in useful directions.

The important caveat: this worked in controlled competitions, not in real search engine conditions. Controlled settings remove the variance, the model updates, and the competitive dynamics that define real AI search environments.

The pairwise comparison insight is genuinely useful, though. Understanding what your competitors look like to an AI system, not just whether you outrank them, is a different and more meaningful frame. That is what competitor comparison in AI search actually requires: knowing how models characterize your category and where your content fits in that picture relative to alternatives.

8. Training-Level Optimization Is Powerful and Impractical

RLRF: RL from Ranker Feedback (October 2025) showed that training LLMs via reinforcement learning with ranker feedback can produce content that outranks competitors, and that the approach generalizes to unseen ranking functions. This is genuinely impressive research.

It is also completely impractical for most organizations. It requires fine-tuning LLMs, which means compute costs, ML expertise, and ongoing maintenance. This is a research result that demonstrates what is theoretically achievable, not a playbook anyone outside a well-resourced AI lab can follow today.

What it does confirm: there are real signals LLMs use to rank content. The challenge is surfacing those signals without access to training infrastructure. That is where external measurement tools and systematic testing fill the gap.

What the Research Actually Tells You to Do

Across these eight studies, a consistent picture emerges.

Quality beats manipulation, consistently. The studies that found durable positive results pointed to overlapping factors: accuracy, user intent alignment, social proof, and competitive differentiation in E-GEO, and structured, well-organized writing in C-SEO Bench. These are not tactics you apply after writing. They are properties of the content itself.

Most specific tactics are noise or negative. Storytelling framing, keyword stuffing, authority signals without substance: these either do not move the needle or actively push you down.

Measurement instability is a genuine problem. If you are not running multiple queries and averaging results, you are measuring noise. Any GEO tracking approach that does not account for non-determinism is giving you false confidence.

Citations do not equal influence. The r = 0.019 correlation is the number you should share with anyone who thinks tracking citation counts is sufficient. Being referenced in an AI answer and shaping that answer are different outcomes entirely.

Systematic learning beats guesswork. AutoGEO achieved a 35.99% average improvement not through better tactics but through better feedback loops. Ongoing measurement and iteration beat any one-time optimization, and cross-engine tracking matters because preferences differ across ChatGPT, Gemini, and Grok.

Treat manipulation tactics as a category to actively avoid: the C-SEO Bench and E-GEO results show they can backfire, and SAGEO Arena suggests that gains reported elsewhere may not hold under realistic queries. Close content gaps based on what is actually missing from AI responses about your category. Measure correctly, with multiple runs and cross-engine comparison. And remember that structural improvements only produce actionable data if the measurement methodology accounts for variance.

The teams winning in AI search are the ones building feedback loops and improving content quality, not the ones running tactics from a checklist. That is slower work. It is also the work that compounds.