How to Format FAQs So AI Search Engines Use Them as Sources

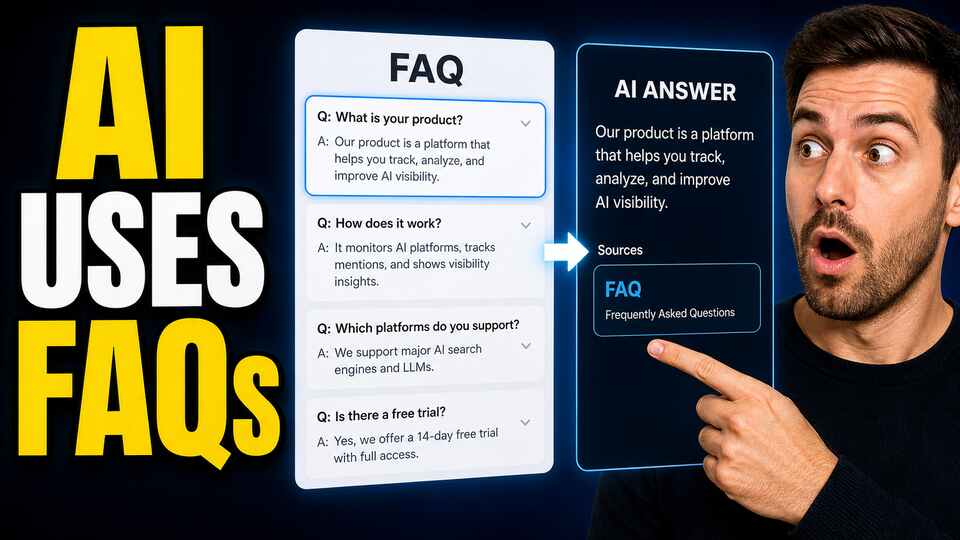

FAQs are one of the most underused weapons in GEO (generative engine optimization). They're easy to write, easy to maintain, and they map almost exactly to the way people now query AI engines: short conversational questions with specific answers. And yet most teams treat their FAQ section as an afterthought: a couple of bulleted questions at the bottom of a product page, with no schema, no structure, and no thought given to how an AI extractor would actually pull from them.

That's leaving citations on the table. Done right, FAQs are one of the highest-impact AEO (answer engine optimization) formats you can ship: the content matches the way users phrase their queries, and FAQPage schema gives AI engines a clean, unambiguous map for extracting answers. Here's how to structure them properly.

Use FAQPage schema. Don't skip it.

The single most important rule is also the most commonly skipped: every FAQ section needs FAQPage schema in JSON-LD. Without it, you've written content the AI engine has to guess at. With it, you've written content the AI engine can extract perfectly.

Despite how easy this is to implement, FAQPage and Q&A schema currently appear on only about 10.5% of AI-cited pages. That's a wide-open opportunity. Adding schema doesn't require new content, new design, or new copy; it takes only a JSON-LD block that mirrors the visible questions and answers on the page.

The basic structure looks like this:

    {
      "@context": "https://schema.org",
      "@type": "FAQPage",
      "mainEntity": [
        {
          "@type": "Question",
          "name": "What is AI visibility?",
          "acceptedAnswer": {
            "@type": "Answer",
            "text": "AI visibility measures how often your brand appears in AI-generated answers across ChatGPT, Gemini, Perplexity, and similar engines."
          }
        }
      ]
    }

One critical detail: the visible questions and answers on the page must match the schema markup exactly. Schema that says one thing while the page shows another is a flag for both Google's rich results validator and AI extractors that compare the rendered content to the structured markup.
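One way to keep the markup and the visible copy in sync is to generate the JSON-LD from the same question-and-answer pairs that render the page. The sketch below is a minimal illustration in Python; `build_faq_jsonld` and `faq_pairs` are hypothetical names, not part of any library.

```python
import json


def build_faq_jsonld(pairs):
    """Build a schema.org FAQPage dict from (question, answer) tuples."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical source of truth: the same pairs that render the visible FAQ.
faq_pairs = [
    (
        "What is AI visibility?",
        "AI visibility measures how often your brand appears in AI-generated "
        "answers across ChatGPT, Gemini, Perplexity, and similar engines.",
    ),
]

# Serialize the markup so it can be placed in a JSON-LD script tag.
jsonld_text = json.dumps(build_faq_jsonld(faq_pairs), indent=2)
print(jsonld_text)
```

Because the page template and the schema read from one list, an edit to an answer changes both at once, so the two are much less likely to drift apart.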

Hit the right answer length

The answer length that performs best for AI extraction is narrow: 40-60 words per answer. This isn't arbitrary. It's the sweet spot where the answer is long enough to provide useful context and short enough to be extracted as a single quotable unit.

The two failure modes:

- Answers under 30 words. Too thin. The AI gets a fragment without enough information to reuse, and citation rate drops sharply.

- Answers over 80 words. Too long. The AI has to either truncate or skip them entirely, because the extraction window doesn't accommodate that much text in one quote.

Aim for 50 words. Edit ruthlessly until each answer is dense, complete, and self-contained. If you find yourself needing more than 60 words, that's usually a sign the question is too broad; split it into two more specific questions.
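These thresholds are easy to check automatically before publishing. The following is a rough sketch, assuming a simple whitespace word count; the function name is hypothetical, and the "borderline" label for the 30-39 and 61-80 word ranges is an assumption added here, since the guidance above only names the target and the two failure modes.

```python
def classify_answer_length(answer):
    """Return (word_count, label) using the word-count thresholds above."""
    words = len(answer.split())
    if words < 30:
        label = "too thin"
    elif words > 80:
        label = "too long"
    elif 40 <= words <= 60:
        label = "target"
    else:
        # Assumption: 30-39 or 61-80 words is usable but worth another edit.
        label = "borderline"
    return words, label


# Synthetic answers of known length, used only to show the labels.
for size in (20, 35, 50, 70, 90):
    print(classify_answer_length(" ".join(["word"] * size)))
```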

Make every answer self-contained

The biggest mistake in FAQ writing for AI is referring back to context the user can't see. AI engines extract individual question-and-answer pairs independently of the rest of the page. If your answer says "as we discussed above" or "see the comparison table below," the AI can't follow the reference, and the answer becomes useless when extracted.

Every answer must work as a standalone unit. It must contain all the context needed to understand it. Test this by reading each answer in isolation, with no surrounding context, and asking whether it still makes sense. If it doesn't, rewrite it to fold the missing context inline.
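Part of the isolation test can be automated by scanning for phrases that point outside the answer. This is a minimal sketch, assuming a short starter list of patterns; both the list and the `find_back_references` name are hypothetical, and a manual read is still needed to catch references the patterns miss.

```python
import re

# Hypothetical starter list of phrases that rely on surrounding page context.
BACK_REFERENCE_PATTERNS = [
    r"\bas (?:we )?(?:discussed|mentioned|noted|shown) (?:above|below|earlier|previously)\b",
    r"\bsee (?:the |our )?[\w\s-]*?\b(?:above|below)\b",
]


def find_back_references(answer):
    """Return the phrases in an answer that depend on off-page context."""
    hits = []
    for pattern in BACK_REFERENCE_PATTERNS:
        hits.extend(m.group(0) for m in re.finditer(pattern, answer, re.IGNORECASE))
    return hits


print(find_back_references("See the comparison table below for details."))
print(find_back_references("As we discussed above, pricing varies by plan."))
```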

Choose the right number of questions

The recommended FAQ count for pillar content is 5-10 questions. Fewer than 5 and the section provides too little signal for the AI to consider it a real FAQ resource. More than 10 and the section becomes overwhelming for human readers, and the per-question authority signal starts to dilute.

Pick the 5-10 questions that real users actually ask about the topic. Mine your support tickets, sales call transcripts, and customer interview notes. The right questions are the ones that come up over and over in real conversations, not the ones that look comprehensive on a content brief.
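If the questions from tickets and transcripts can be exported as plain strings, a frequency count is a reasonable first pass at finding the ones that recur. The sketch below assumes exactly that; `normalize` and `top_questions` are hypothetical helpers, and the matching is exact after normalization, so differently worded versions of the same question still need to be grouped by hand.

```python
from collections import Counter


def normalize(question):
    """Lowercase, collapse whitespace, and enforce a single trailing '?'."""
    return " ".join(question.lower().split()).rstrip("? ") + "?"


def top_questions(raw_questions, limit=10):
    """Return up to `limit` (question, count) pairs, most frequent first."""
    counts = Counter(normalize(q) for q in raw_questions if q.strip())
    return counts.most_common(limit)


# Hypothetical export of questions pulled from support tickets.
tickets = [
    "How do I reset my password?",
    "how do I  reset my password",
    "What is AI visibility?",
]
print(top_questions(tickets))
```

The default `limit` of 10 mirrors the upper bound recommended above; a result with fewer than 5 entries suggests the source data is too thin to build the section from.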

Use natural-language question phrasing

Phrase your questions the way humans actually ask them, not the way SEO copywriters traditionally write them. "What is the difference between SEO and GEO?" is good. "SEO vs GEO Difference Explained 2026" is bad. The first phrasing matches how a user would actually type into ChatGPT or Perplexity. The second is keyword-stuffed junk that no real person would ever say out loud.

Two specific tactics:

- Start questions with the words real users use: "What is…", "How do I…", "Should I…", "Why does…", "When should I…"

- Include the entity name explicitly in the question. "How does Notion handle permissions?" beats "Permissions explained" because it matches the way a user would prompt an AI for the answer.
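Both tactics can be turned into a quick lint for draft questions. This is an illustrative sketch rather than a complete rule set: `check_question` is a hypothetical helper, and the opener list is the one above plus "how does", added here so that the Notion example passes.

```python
# Openers from the list above; "how does" is an addition for this sketch.
QUESTION_OPENERS = (
    "what is",
    "how do i",
    "how does",
    "should i",
    "why does",
    "when should i",
)


def check_question(question, entity):
    """Return a list of phrasing issues for one FAQ question."""
    text = question.strip()
    lowered = text.lower()
    issues = []
    if not lowered.startswith(QUESTION_OPENERS):
        issues.append("does not start with a common question opener")
    if not text.endswith("?"):
        issues.append("does not end with a question mark")
    if entity.lower() not in lowered:
        issues.append("does not name the entity")
    return issues


print(check_question("How does Notion handle permissions?", "Notion"))
print(check_question("Permissions explained", "Notion"))
```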

Front-load the answer in every response

Even within the 40-60 word answer window, the first sentence matters most. Put the direct answer in sentence one, then use the remaining words for supporting detail. The AI extractor will sometimes pull only the first sentence, and if your first sentence is a buildup phrase, the citation will be useless.

Compare:

- ❌ "Many factors go into determining how AI engines pick sources, but the most common include authority signals, content freshness, and clear structure that makes information easy to extract."

- ✅ "AI engines pick sources based primarily on authority signals, content freshness, and structural clarity. The most-cited sources combine all three: high domain authority, content updated within the last 12 months, and clear question-answer formatting."

The second version's first sentence is itself a complete, quotable answer. The supporting sentence adds depth without being required. That's the structure that gets cited.

Match neutral, informative tone

FAQs that read as advertising get cited far less often than FAQs that read as documentation. AI engines prefer non-commercial sources for citation, and they detect promotional language quickly. Keep your FAQ tone neutral, factual, and free of marketing claims.

Specifically, avoid:

- Superlatives ("the best", "the leading", "industry-leading")

- Marketing taglines or product slogans

- Calls-to-action embedded in the answer ("Try it free today!")

- Comparisons that frame your product as obviously superior

If your answer could appear on a competitor's blog and still be true, it's neutral enough. If it only makes sense in your own marketing voice, it's too commercial.
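A simple pattern scan catches the most obvious promotional phrasing before an editor reviews tone. The sketch below assumes a small, hypothetical pattern list seeded with the examples above; the extra call-to-action phrases are illustrative additions, and taglines or one-sided comparisons still need a human read.

```python
import re

# Hypothetical starter patterns; extend them with your own brand's phrasing.
PROMO_PATTERNS = {
    "superlative": r"\b(?:the best|the leading|industry-leading)\b",
    "call to action": r"\b(?:try it free|sign up (?:now|today)|book a demo)\b",
}


def find_promotional_language(answer):
    """Return (label, matched_text) pairs for promotional phrases in an answer."""
    findings = []
    for label, pattern in PROMO_PATTERNS.items():
        for match in re.finditer(pattern, answer, re.IGNORECASE):
            findings.append((label, match.group(0)))
    return findings


print(find_promotional_language("We are the leading platform. Try it free today!"))
```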

Include data and external citations inside the answers

FAQs with specific data points and references to authoritative external sources outperform plain-text FAQs by a significant margin. Inside an answer, a sentence like "According to a 2026 study of 8,000 AI citations, 27% of ChatGPT citations come from Wikipedia" carries far more weight than the same claim without the source.

Where you can, include:

- Specific numbers and percentages

- Named studies, reports, or sources

- Date references to keep the answer feeling current

- Hyperlinks to authoritative sources for verification

This makes the AI engine treat your FAQ entry as a higher-credibility source, closer to an editorial citation than a marketing page.

Refresh FAQ content regularly

FAQs decay fast. Pricing changes, features ship, regulations update, and within a few months the answers in your FAQ section can be quietly wrong. AI engines downgrade stale content, especially in fast-moving categories.

The right cadence is roughly monthly for high-traffic FAQs and quarterly for everything else. Add a "Last updated" timestamp to each FAQ section. Bonus points if you note the actual change ("Last updated: April 2026, pricing reflects current plans") because date-stamped, change-noted content is exactly what AI engines look for as freshness signals.
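If each FAQ section stores its "Last updated" date, the review cadence can be checked with a few lines of code. This is a minimal sketch that approximates "monthly" as 30 days and "quarterly" as 90 days; those day counts and the `is_stale` helper are assumptions made for illustration.

```python
from datetime import date, timedelta

# Assumed review windows: about monthly for high-traffic FAQs, quarterly otherwise.
HIGH_TRAFFIC_WINDOW = timedelta(days=30)
DEFAULT_WINDOW = timedelta(days=90)


def is_stale(last_updated, high_traffic, today=None):
    """Return True if an FAQ section is overdue for review."""
    today = today or date.today()
    window = HIGH_TRAFFIC_WINDOW if high_traffic else DEFAULT_WINDOW
    return today - last_updated > window


# The same section is overdue on the monthly cadence but not the quarterly one.
print(is_stale(date(2026, 1, 1), high_traffic=True, today=date(2026, 3, 1)))
print(is_stale(date(2026, 1, 1), high_traffic=False, today=date(2026, 3, 1)))
```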

Validate the schema before shipping

Before any FAQ page goes live, run it through Google's Rich Results Test. The validator catches the most common mistakes: schema that doesn't match the visible content, missing required fields, and structural errors that prevent the schema from being parsed. A FAQ section with broken schema is functionally invisible to AI engines, no matter how good the content underneath is.
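A local pre-check can catch the same classes of mistake earlier, for example in a build step. The sketch below is a rough approximation and not a substitute for the Rich Results Test: `check_faq_jsonld` is a hypothetical helper, it only looks at the fields used in the sample markup earlier in this article, and it assumes `mainEntity` is a list.

```python
import json


def check_faq_jsonld(jsonld_text, visible_pairs):
    """Return a list of problems in a FAQPage JSON-LD string (empty if none)."""
    try:
        data = json.loads(jsonld_text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level JSON value is not an object"]

    problems = []
    if data.get("@type") != "FAQPage":
        problems.append("@type is not FAQPage")

    entities = data.get("mainEntity")
    if not isinstance(entities, list) or not entities:
        # Simplification: a single-object mainEntity is reported as an error.
        return problems + ["mainEntity is missing, empty, or not a list"]

    marked_up = []
    for index, item in enumerate(entities):
        if not isinstance(item, dict):
            problems.append(f"mainEntity[{index}] is not an object")
            continue
        answer = item.get("acceptedAnswer")
        text = answer.get("text") if isinstance(answer, dict) else None
        if item.get("@type") != "Question" or not item.get("name") or not text:
            problems.append(f"mainEntity[{index}] is missing a required field")
            continue
        marked_up.append((item["name"], text))

    # Every visible question-and-answer pair must appear verbatim in the markup.
    for question, answer in visible_pairs:
        if (question, answer) not in marked_up:
            problems.append(f"visible Q&A not mirrored in markup: {question}")
    return problems
```

An empty list means the markup parsed, had the expected fields, and mirrored every visible pair passed in; anything else is a list of human-readable problems to fix before running the external validator.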

The compounding effect

FAQs are uniquely well-suited to GEO because every question-answer pair is itself a tiny standalone piece of content with its own citation potential. A page with 10 FAQ entries is effectively 10 different citation candidates, and a single page can end up cited for 5 or 6 different prompts, each tied to a different FAQ question.

That compounding is hard to get from any other content format. It's also one of the cheapest forms of GEO content to maintain. Once you have the structure, the schema, and the writing rules in place, expanding FAQ coverage is faster than producing any other long-form content, and the citation returns continue to accumulate over months.

Treat FAQs as first-class content, not as an afterthought. Schema them properly. Write them tight. Refresh them often. They'll earn more AI citations per word than almost anything else on your site.