How to Evaluate the Quality of Citations in AI Answers

"My brand was cited in a ChatGPT answer" sounds like a win. Sometimes it is. Sometimes it isn't.

Citations in AI answers vary widely in quality. A citation from Wikipedia carries different weight than a citation from a personal blog. A citation that supports an accurate claim is gold; a citation that supports a hallucinated claim is a liability. A citation in Perplexity, where users actually click through to verify, is worth more than a footnote in a ChatGPT answer that most users never inspect.

If you're tracking citations as part of your AI visibility strategy, you need to evaluate them, not just count them. Here's how to think about citation quality, and what to actually measure.

Start with how each engine handles citations differently

You can't evaluate citation quality without understanding that the major AI engines treat citations completely differently. The architecture matters.

Perplexity is the most citation-rich engine. It frequently displays inline citations to the sources it used, making verification straightforward and giving users an easy path to click through. A Perplexity citation is typically the highest-quality citation by impact, because it actually drives traffic and the user is invited to verify the claim.

Google Gemini typically shows source links in its generated answers. That creates both opportunity and risk: cited publishers gain visibility, but there is a real chance users read the answer and never click through to the original content. Gemini citations matter a lot for visibility and somewhat less for direct traffic.

ChatGPT without plugins or browse mode typically does not supply source links. Model-native answers, the default ChatGPT experience for most queries, synthesize from training data without exposing where the facts came from. When ChatGPT does cite sources (with browse, custom GPTs, or retrieval augmentation), the citations behave more like Perplexity's. When it doesn't, your "citation" is invisible to the end user even when you're effectively the source of the claim.

Claude recently added web search and now operates in two modes, model-native or retrieval-augmented, depending on query type. Citation visibility tracks the mode the model picks for each query.

The core architectural distinction is between model-native synthesis (generating from training data patterns) and retrieval-augmented generation, or RAG (pulling from live sources, then synthesizing). RAG-based engines give better traceability and higher-quality citation experiences. Model-native engines are faster but leave users to verify everything externally, and most users don't.

This means a citation in Perplexity is structurally more valuable than the equivalent in default ChatGPT, even when both engines mention your brand. Your citation-quality framework has to weight engines differently.
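One way to make that weighting concrete is a per-engine multiplier applied to raw citation counts. The sketch below is a minimal illustration: the engine keys and every weight value are assumptions chosen only to reflect the ordering described above, not measured figures, so tune them against your own traffic data.

```python
# Illustrative weights only. The numbers are assumptions, not measurements;
# they simply encode "visible, clickable citations count for more".
ENGINE_WEIGHTS = {
    "perplexity": 1.0,       # inline citations, users invited to click through
    "chatgpt_browse": 0.8,   # cites sources when retrieval is active
    "gemini": 0.7,           # source links shown, less direct traffic
    "chatgpt_default": 0.1,  # model-native answer, no visible source link
}


def weighted_citation_score(citations_by_engine: dict[str, int]) -> float:
    """Sum citation counts after applying the per-engine weight.

    Engines missing from ENGINE_WEIGHTS contribute 0 rather than raising,
    so an untracked engine never inflates the score.
    """
    return sum(
        ENGINE_WEIGHTS.get(engine, 0.0) * count
        for engine, count in citations_by_engine.items()
    )


if __name__ == "__main__":
    print(weighted_citation_score({"perplexity": 2, "chatgpt_default": 10}))
```

With weights like these, ten mentions in default ChatGPT count for less than two inline Perplexity citations, which is the asymmetry the raw count hides.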

Define what "quality" actually means for your goals

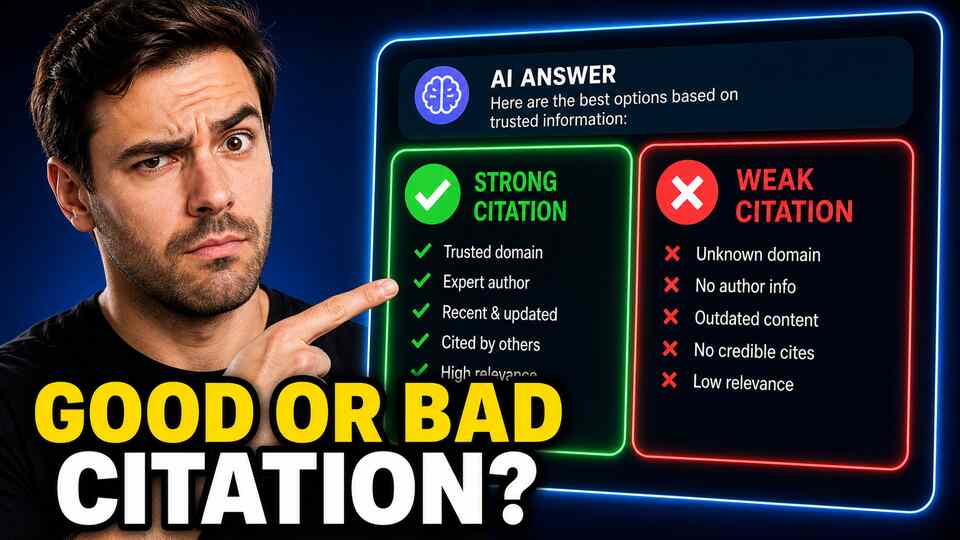

Citation quality isn't a single dimension. There are at least four separate things people mean when they say a citation is "high quality", and they don't always move together:

- Authority quality: the citing AI's judgment that this source is trustworthy. A citation from a model that already trusts your domain (high backlink authority, strong E-E-A-T signals, frequently retrieved) is more durable than a one-off mention from a fragile source.

- Relevance quality: how well the cited URL actually matches the user's question. A citation to your homepage when the question was about a specific feature is a low-relevance citation, even though it counts.

- Accuracy support: whether the citation is being used to back up a true claim or a hallucinated one. If the AI invented something and pinned your URL to it, that's a brand risk, not a brand win.

- Click-through value: whether the citation drives real traffic. Inline, prominent citations in Perplexity drive measurable visits; buried, footnoted citations in some other engines drive almost none.

Most teams collapse all four into "we got cited." Don't. Pick the dimensions that matter most for your goals (usually accuracy and click-through value for marketing teams, authority and relevance for SEO and content teams) and score citations on those specific axes.
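One way to keep the four dimensions separate is to score each on its own axis and then average only the axes your team cares about. This is a minimal sketch, assuming 0-to-1 scores assigned by hand during review; the class name, profile names, and equal weighting within a profile are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class CitationScores:
    """Hand-assigned scores from 0.0 (poor) to 1.0 (excellent), one per dimension."""
    authority: float
    relevance: float
    accuracy: float
    click_through: float


# Which axes each team scores on, following the split described above.
PROFILES = {
    "marketing": ("accuracy", "click_through"),
    "seo_content": ("authority", "relevance"),
}


def profile_score(scores: CitationScores, profile: str) -> float:
    """Average only the axes that belong to the chosen profile."""
    axes = PROFILES[profile]
    return sum(getattr(scores, axis) for axis in axes) / len(axes)


if __name__ == "__main__":
    s = CitationScores(authority=0.5, relevance=0.25, accuracy=1.0, click_through=0.5)
    print(profile_score(s, "marketing"), profile_score(s, "seo_content"))
```

The same citation can look strong to one team and weak to another, which is why a single collapsed score hides more than it shows.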

Build a simple grading system

You don't need an enterprise tool to start grading citations. A simple weekly review of your top 50 cited URLs is enough. For each citation, ask three questions:

- Is the cited URL the right page for the question? If a user clicked the citation, would they get the answer they expected? (Y/N)

- Is the AI using the citation to support an accurate claim about your brand? (Y/N)

- Is the cited page in good shape today? Is the content current, well-formatted, and aligned with how you want to be described? (Y/N)

Three yeses is a high-quality citation. Two yeses means the citation is working but could be improved. One or zero means the citation is actively hurting you: either the URL is wrong, the AI is misrepresenting you with it, or the cited page is so stale that landing visitors will be disappointed.

You'll be surprised how many "wins" turn out to be 1-of-3 once you score them.
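The three-question rubric is simple enough to keep in a spreadsheet, but it can also be sketched in a few lines of code. The field names and grade labels below are assumptions made for illustration; the thresholds follow the rubric above.

```python
from dataclasses import dataclass


@dataclass
class CitationReview:
    """One reviewer's Y/N answers for a single cited URL."""
    url: str
    right_page: bool          # would a clicking user get the answer they expected?
    accurate_claim: bool      # is the AI's claim about the brand accurate?
    page_in_good_shape: bool  # is the page current, well formatted, on-message?


def grade(review: CitationReview) -> str:
    """Map the number of yes answers to the grade described in the rubric."""
    yeses = sum([review.right_page, review.accurate_claim, review.page_in_good_shape])
    if yeses == 3:
        return "high-quality"
    if yeses == 2:
        return "working, could be improved"
    return "hurting"


if __name__ == "__main__":
    sample = CitationReview("https://example.com/features", True, True, False)
    print(sample.url, "->", grade(sample))
```

Running this over the weekly top-50 list gives a quick count of how many citations fall into each bucket.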

Watch for hallucinated citations

One of the most overlooked categories of low-quality citation is the hallucinated citation, where the AI confidently cites a URL that doesn't exist, doesn't match the claim, or doesn't actually contain the content the model says it contains. This happens more often than people think, especially in older or smaller AI engines. When it happens to your brand, a user who clicks the citation finds something that doesn't match the AI's claim, and the trust loss attaches to you, not the AI.

Audit a sample of your citations every month by actually clicking them. Verify that the URL exists, that the page is on the topic the AI claimed, and that the content supports (rather than contradicts) the AI's framing. It's tedious. It's also the only way to catch hallucinated citations before customers do.
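Part of that monthly check can be pre-screened with a script before a human looks at the results. The sketch below assumes you already have each cited URL plus a few terms the AI's claim implies should appear on the page; the function names and the keyword test are illustrative assumptions. Keyword matching is only a rough proxy for "on topic", and judging whether a page supports or contradicts the AI's framing still needs a person.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable


def fetch_page(url: str, timeout: float = 10.0) -> tuple[int, str]:
    """Fetch a URL with the standard library; return (status, body text).

    Returns status 0 when the request fails before any HTTP response arrives.
    """
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status, resp.read().decode("utf-8", errors="replace")
    except urllib.error.HTTPError as err:  # 404, 410, 500, ...
        return err.code, ""
    except (OSError, ValueError):  # DNS failure, timeout, malformed URL
        return 0, ""


def audit_citation(
    url: str,
    expected_terms: Iterable[str],
    fetch: Callable[[str], tuple[int, str]] = fetch_page,
) -> dict:
    """Check that the URL resolves and that the page mentions the expected terms."""
    terms = list(expected_terms)
    status, body = fetch(url)
    exists = status == 200  # urlopen follows redirects, so this is the final status
    text = body.lower()
    missing = [t for t in terms if t.lower() not in text] if exists else terms
    return {
        "url": url,
        "exists": exists,
        "on_topic": exists and not missing,
        "missing_terms": missing,
    }
```

Any row with `exists` or `on_topic` set to false goes to the top of the manual review queue. The `fetch` parameter is injectable so the check can be exercised against saved pages without network access.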

Track citation source mix, not just citation count

A healthy citation profile has source diversity. If 90% of your citations come from your own domain, you're fragile: a single algorithm change can wipe you out. If your citations are spread across your owned content, two or three authoritative third-party domains, your Wikipedia article, and a couple of well-regarded review sites, you're durable. A model update has to eat through several pillars before your visibility collapses.

For each tracked prompt, look at the distribution of cited domains. If one domain is doing 80% of the work, you have a concentration risk. If your owned domain is missing entirely, you have an opportunity: your own content should be one of the top sources for your own brand. If a competitor's content is being cited as a source about you, that's an urgent gap.
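The three checks for a tracked prompt can be sketched as a small function over the list of cited URLs. This is an illustration under stated assumptions: the function names and flag labels are invented here, and the 0.8 threshold simply mirrors the 80% figure above.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_shares(cited_urls: list[str]) -> dict[str, float]:
    """Return each cited domain's share of citations, most cited first."""
    domains = [urlparse(u).netloc.lower().removeprefix("www.") for u in cited_urls]
    counts = Counter(domains)
    total = sum(counts.values())
    return {domain: n / total for domain, n in counts.most_common()}


def source_mix_flags(
    cited_urls: list[str],
    owned_domain: str,
    competitor_domains: tuple[str, ...] = (),
    concentration_threshold: float = 0.8,
) -> list[str]:
    """Flag concentration risk, a missing owned domain, and competitor sources."""
    shares = domain_shares(cited_urls)
    flags = []
    if shares and max(shares.values()) >= concentration_threshold:
        flags.append("concentration_risk")
    if owned_domain not in shares:
        flags.append("owned_domain_missing")
    if any(d in shares for d in competitor_domains):
        flags.append("competitor_cited_as_source")
    return flags


if __name__ == "__main__":
    urls = ["https://www.example.com/a"] * 4 + ["https://rival.example/review"]
    print(domain_shares(urls))
    print(source_mix_flags(urls, "example.com", ("rival.example",)))
```

Run it once per tracked prompt and review any prompt that returns a non-empty flag list.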

Use the right tools for the audit

Several AI visibility platforms now track citation analytics, surfacing which domains AI engines rely on, which competitor domains dominate the answers, and how citation patterns shift over time. The core capabilities you want are:

- Per-prompt source listing: for any tracked prompt, see which URLs were cited and from which domains

- Aggregate source ranking: across your full prompt set, see which domains are most cited, sorted by frequency

- Trend lines: see whether a given domain is rising or falling as a cited source for your category

- Competitor source overlap: which sources cite your competitors that don't yet cite you

That last one is the highest-leverage view. Once you know which domains the AI trusts in your category, you have a target list for outreach, guest posting, and earned media. Citation quality goes up not just by improving your own content, but by getting cited from the right places.
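If your tool can export the cited URLs, the competitor-overlap view reduces to a set difference ranked by frequency. The sketch below assumes two plain lists of URLs, one cited in answers about you and one cited in answers about competitors; the function names and data shape are assumptions, not any platform's export format.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Normalize a URL to its bare domain."""
    return urlparse(url).netloc.lower().removeprefix("www.")


def outreach_targets(
    your_cited_urls: list[str],
    competitor_cited_urls: list[str],
) -> list[tuple[str, int]]:
    """Rank domains cited for competitors that are never cited for you."""
    yours = {domain_of(u) for u in your_cited_urls}
    competitor_counts = Counter(domain_of(u) for u in competitor_cited_urls)
    return [
        (domain, count)
        for domain, count in competitor_counts.most_common()
        if domain not in yours
    ]


if __name__ == "__main__":
    mine = ["https://wiki.example/z"]
    theirs = [
        "https://reviews.example/a",
        "https://reviews.example/b",
        "https://news.example/x",
        "https://wiki.example/y",
    ]
    print(outreach_targets(mine, theirs))
```

The domains at the top of the returned list are the ones the AI leans on most for competitors, so they are the first candidates for outreach.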

Citations are content infrastructure, not vanity metrics

The biggest mindset shift in citation quality work is treating citations as infrastructure rather than as wins. A citation is a load-bearing piece of your AI visibility, and just like any load-bearing piece, you have to inspect it, maintain it, and replace it when it breaks. A bad citation is worse than no citation. A great citation, properly evaluated and reinforced, can keep working for years.

Grade them. Audit them. Diversify them. Replace the bad ones.

Related: How to Track AI Citations Over Time and Spot the Trends That Matter.